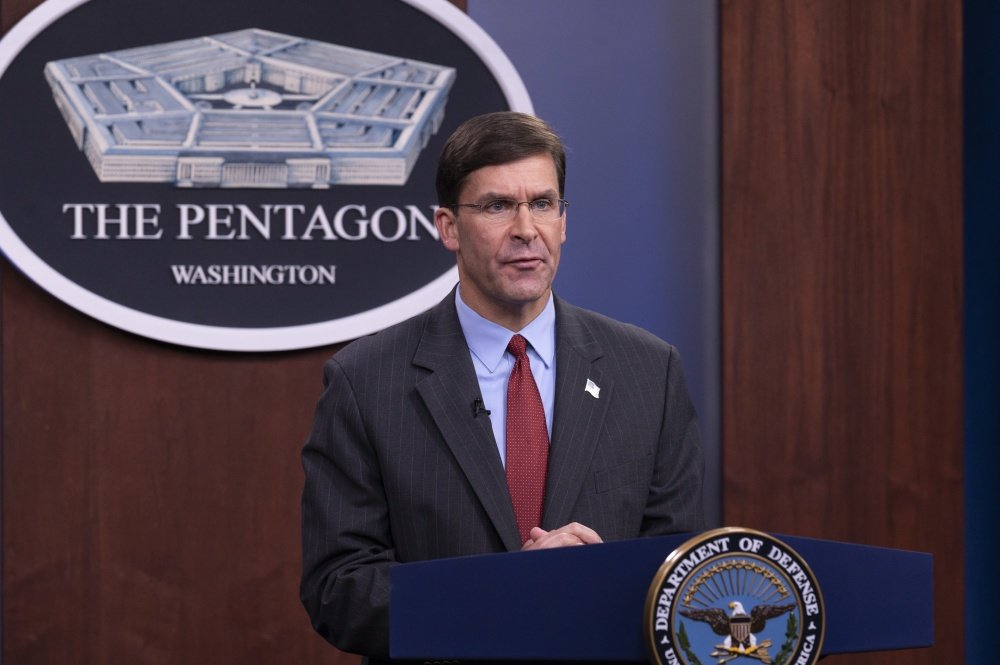

Esper Says Air Combat Showdown Between Human and AI Pilots Will Happen in 2024

Secretary of Defense Dr. Mark T. Esper records a statement for a virtual AI symposium in the Pentagon Briefing Room, Sept. 8, 2020. DoD photo by Marvin Lynchard, via DVIDS.

Fight’s on.

US Secretary of Defense Mark Esper threw down the gauntlet on Wednesday, declaring that within four years human fighter pilots would go mano a mano against artificial intelligence adversaries piloting tactical aircraft.

It’s a tall order, but the development of artificial intelligence, or AI, technology for military use has become a cornerstone of the burgeoning arms race between China, Russia, and the US in this new era of so-called great power competition. America’s military advantage over its adversaries is eroding, many experts warn, and to reverse that trend, Washington is increasingly committing resources to the development of AI technology for combat.

In his Wednesday remarks, Esper said that AI technology would revolutionize the future of warfare in the same way that precision-guided weapons and stealth technology gave the US military a seemingly insurmountable advantage over its adversaries in the decades following Operation Desert Storm and the Soviet Union’s collapse.

“History informs us that those who are first to harness once-in-a-generation technologies often have a decisive advantage on the battlefield for years to come,” Esper said in prepared remarks delivered to the Department of Defense’s Joint Artificial Intelligence Symposium, according to an article posted to the Pentagon’s website.

“I experienced this firsthand during Operation Desert Storm, when the United States’ military’s smart bombs, stealth aircraft and satellite-enabled GPS helped decimate Iraqi forces and their Soviet equipment,” Esper said.

Three weeks ago, an artificial intelligence algorithm defeated a top US Air Force fighter pilot 5-0 during a series of simulated F-16 fighter dogfights conducted in a virtual reality trainer. Esper said last month’s exercise demonstrated the “tectonic impact of machine learning” on the future of air combat.

“The AI agent’s resounding victory demonstrated the ability of advanced algorithms to outperform humans in virtual dogfights. These simulations will culminate in a real-world competition involving full-scale tactical aircraft in 2024,” Esper said Wednesday.

Eight teams were selected last year to compete in the Defense Advanced Research Projects Agency’s AlphaDogfight Trials, which lasted from Aug. 18 to 20 and were meant to showcase the ability of advanced AI algorithms to conduct simulated within-visual-range air combat maneuvering — better known as the “dogfight.”

During those tests, an AI algorithm produced by Heron Systems repeatedly prevailed over its human adversary — a fighter pilot from the District of Columbia Air National Guard with 2,000 hours of flight time.

While last month’s dogfighting showdown was undoubtedly a game changer for the future of air combat, it was only, in the end, simulated combat. And any simulation, no matter how well conceived, inevitably excludes details that could affect the overall outcome of an air combat encounter in the real world.

Justin Mock of DARPA, a fighter pilot who commentated a webcast of the Aug. 20 trial, said the AI pilot had a “superhuman aiming ability.”

“There’s a long way to go. This was a far cry from going out in an F-16 and flying actual [basic fighter maneuvers],” Mock said. “But I think we made a really large step, a giant leap if you will, in the direction we’re going.”

Similar to the creation of nuclear weapons in the last century, the development of AI technology for combat raises questions regarding the ethical use of such novel technology in war. Chief among those concerns is the fact that AI technology could, in theory, take humans out of the so-called kill chain of military operations — thereby leaving decisions on the just use of lethal force up to computer algorithms.

AI advocates, however, argue that humans have a long history of committing war crimes against members of their own species, and the introduction of emotionally inert machines on the battlefield may actually, in the long run, reduce unnecessary deaths in war.

“Autonomous armed robotic vehicles do not need to have self-preservation as a foremost drive, if at all. They can be used in a self sacrificing manner if needed and appropriate without reservation by a commanding officer,” wrote Ronald Arkin, regents’ professor and director of the Mobile Robot Laboratory at the Georgia Institute of Technology, in a report for the Institute of Electrical and Electronics Engineers.

“There is no need for a ‘shoot first, ask-questions later’ approach, but rather a ‘first-do-no-harm’ strategy can be utilized instead,” Arkin wrote about AI-controlled combat platforms. “They can truly assume risk on behalf of the noncombatant, something that soldiers are schooled in, but which some have difficulty achieving in practice.”

Moreover, many technology experts say AI algorithms won’t immediately replace human pilots altogether. Rather, a fleet of AI-piloted, “loyal wingman” aircraft may be created to accompany human-piloted warplanes through the combat environment. Human fighter pilots, in such a scenario, would be somewhat analogous to football quarterbacks calling plays for their teammates to execute.

America’s contemporary adversaries are also funneling resources into developing AI systems for combat. The Chinese Communist Party is determined to become the world leader in AI within 10 years, and Russian President Vladimir Putin has said the nation that leads in AI will be the “ruler of the world.”

“Unlike advanced munitions or next-generation platforms, artificial intelligence is in a league of its own, with the potential to transform nearly every aspect of the battlefield, from the back office to the front lines,” Esper said. “That is why we cannot afford to cede the high ground to revisionist powers intent on bending, breaking or reshaping international rules and norms in their favor — to the collective detriment of others.”

China has been heavily investing in advanced weapons, including AI, in a bid to challenge American military dominance. China is also developing new nuclear weapons, missiles, warships, and stealth drones.

Some US defense experts have sounded the alarm on China’s AI development program, warning that in its bid to “leapfrog” the US, China may create dangerous new weapons systems with unintended or unplanned consequences. For his part, Esper warned that China could exploit advanced AI technology to oppress its own citizens.

“Beijing is constructing a 21st-century surveillance state designed to wield unprecedented control over its own people,” Esper said. “As China scales this technology, we fully expect it to sell these capabilities abroad, enabling other autocratic governments to move toward a new era of digital authoritarianism.”

Unlike America’s adversaries, Esper said, the US military won’t rush such technology to the front lines. The Pentagon has already adopted a set of ethical standards for the use of AI technology in warfare, Esper added.

“These principles make clear to the American people — and the world — that the United States will once again lead the way in the responsible development and application of emerging technologies, reinforcing our role as the global security partner of choice,” Esper said.

More than 200 civil service and military professionals now work at the Pentagon’s Joint Artificial Intelligence Center, developing AI technology for military and civilian use.
